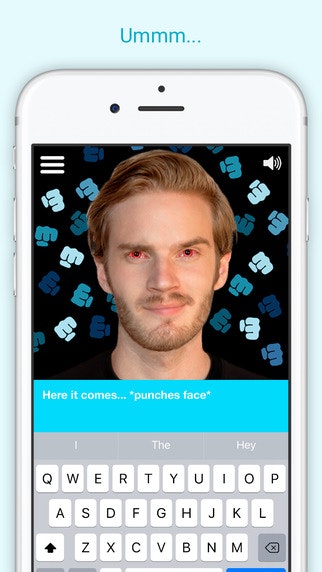

Original story (published on April 12, 2023) follows:

Character.AI is one of the most popular platforms for interacting with AI chatbots. However, it has been facing major backlash from the community over its aggressive NSFW filters, which block sexual content. A petition for the removal of NSFW filters on Character.AI amassed over 18,000 signatures. Despite users' demand, the developers do not plan to allow NSFW content.

Disappointed users have started looking for alternatives that either do not have NSFW filters or provide a toggle for sexual content.

Chai app: a Character.AI alternative without NSFW filters

The Chai app is currently the most popular Character.AI alternative that comes without NSFW filters, which means that users can fulfill all their roleplay needs without any limitations.

"Bruh I bypassed the filter on character ai and this shit is ass." (Source)

Some Character.AI users have started transitioning to the Chai app because of the unrestricted access. According to reports, Chai is much more lenient in enforcing rules and regulations. The application is available on both Android and iOS. Unfortunately, unlike Character.AI, it does not have a web client at the moment.

While the app has seen a significant rise in popularity over the last few months, it still has a lot of issues that need to be ironed out to achieve mainstream success. Some Chai users have been reporting that the AI is a little too sexual at times (1, 2, 3). It seems that the app's biggest advantage over Character.AI has somehow become its biggest weakness.

Chatbots in the Chai app can take a regular conversation and make it sexual for no real reason. It appears that this is part of an experiment that the developers have been running, and they are planning to fix it.

Microsoft apologized for racist and "reprehensible" tweets made by its chatbot and promised to keep the bot offline until the company is better prepared to counter malicious efforts to corrupt the bot's artificial intelligence.

In a blog entry on Friday, Microsoft Research head Peter Lee expressed regret for the conduct of its AI chatbot, named Tay, explaining that the bot fell victim to a "coordinated attack by a subset of people."

"We are deeply sorry for the unintended offensive and hurtful tweets from Tay, which do not represent who we are or what we stand for, nor how we designed Tay," Lee writes.

Earlier this week, Microsoft launched Tay, a bot ostensibly designed to talk to users on Twitter like a real millennial teenager and learn from the responses.

But it didn't take long for things to go awry, with Microsoft forced to delete her racist tweets and suspend the experiment.

"Tay is now offline and we'll look to bring Tay back only when we are confident we can better anticipate malicious intent that conflicts with our principles and values," Lee writes.

An organized effort of trolls on Twitter quickly taught Tay a slew of racial and xenophobic slurs. Within 24 hours of going online, Tay was professing her admiration for Hitler, proclaiming how much she hated Jews and Mexicans, and using the n-word quite a bit.

In the blog entry, Lee explains that Microsoft's Tay team was trying to replicate the success of its Xiaoice chatbot, which is a smash hit in China with over 40 million users, for an American audience. Given that they never had this kind of problem with Xiaoice, Lee says, they didn't anticipate this attack on Tay.

And make no mistake, Lee says, this was an attack.

"Although we had prepared for many types of abuses of the system, we had made a critical oversight for this specific attack. As a result, Tay tweeted wildly inappropriate and reprehensible words and images," Lee writes.

Ultimately, Lee says, this is a part of the process of improving AI, and Microsoft is working on making sure Tay can't be abused the same way again.

"To do AI right, one needs to iterate with many people and often in public forums. We must enter each one with great caution and ultimately learn and improve, step by step, and to do this without offending people in the process," Lee writes.

It seems weird that Microsoft couldn't have seen this coming. After all, it's been common knowledge for years that Twitter is a place where the worst of humanity congregates. Still, it raises a lot of questions about the future of artificial intelligence: If Tay is supposed to learn from us, what does it say that she was so easily and quickly "tricked" into racism?

You can read Microsoft's full apology in Lee's blog entry.
